
12/11/2023 0 Comments Torch nn sequential pointerBatchNorm2d( 512, momentum = BATCH_NORMALIZATION_MOMENTUM),īlock4_1 = BaseBlock( input_channel = 256, output_channel = 512, stride = 2, shortcut = block4_1_shortcut)īlock4_2 = BaseBlock( input_channel = 512, output_channel = 512, stride = 1, shortcut = None) BatchNorm2d( 256, momentum = BATCH_NORMALIZATION_MOMENTUM),īlock3_1 = BaseBlock( input_channel = 128, output_channel = 256, stride = 2, shortcut = block3_1_shortcut)īlock3_2 = BaseBlock( input_channel = 256, output_channel = 256, stride = 1, shortcut = None)

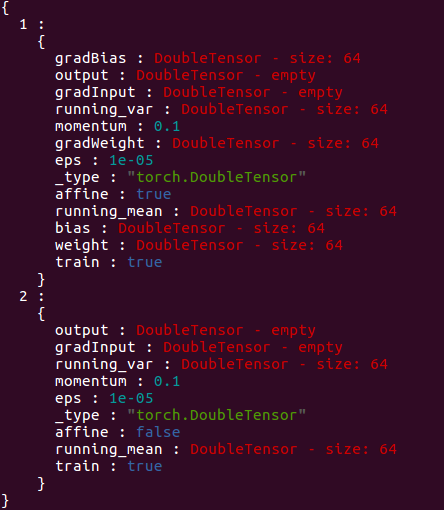

BatchNorm2d( 128, momentum = BATCH_NORMALIZATION_MOMENTUM),īlock2_1 = BaseBlock( input_channel = 64, output_channel = 128, stride = 2, shortcut = block2_1_shortcut)īlock2_2 = BaseBlock( input_channel = 128, output_channel = 128, stride = 1, shortcut = None) # group1 block1_1_shortcut = None block1_1 = BaseBlock( input_channel = 64, output_channel = 64, stride = 1, shortcut = block1_1_shortcut)īlock1_2 = BaseBlock( input_channel = 64, output_channel = 64, stride = 1, shortcut = None) BatchNorm2d( 64, momentum = BATCH_NORMALIZATION_MOMENTUM) Skip = input output += skip output = self. stride = stride def forward( self, input): BatchNorm2d( output_channel, momentum = BATCH_NORMALIZATION_MOMENTUM) Conv2d( output_channel, output_channel, kernel_size =( 3, 3), stride =( 1, 1), padding =( 1, 1), bias = False) Conv2d( input_channel, output_channel, kernel_size =( 3, 3), stride =( stride, stride), padding =( 1, 1), bias = False)

Module):Įxpansion = 1 def _init_( self, input_channel, output_channel, stride = 1, shortcut = None): nn as nn BATCH_NORMALIZATION_MOMENTUM = 0.1 class BaseBlock( nn.
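
The `BaseBlock` fragments above leave out several lines, so here is a minimal runnable sketch of how such a residual block could be completed and chained with `nn.Sequential`. The constructor arguments and the `skip` / `output += skip` logic follow the post. The attribute names (`conv1`, `bn1`, `conv2`, `bn2`, `relu`), the ReLU activations, and the `make_shortcut` helper (a 1x1 convolution followed by batch normalization) are assumptions borrowed from the usual ResNet layout, not something the post confirms.

```python
import torch
import torch.nn as nn

BATCH_NORMALIZATION_MOMENTUM = 0.1


class BaseBlock(nn.Module):
    expansion = 1

    def __init__(self, input_channel, output_channel, stride=1, shortcut=None):
        super().__init__()
        # Attribute names are assumptions; the layer arguments match the fragments.
        self.conv1 = nn.Conv2d(input_channel, output_channel, kernel_size=(3, 3),
                               stride=(stride, stride), padding=(1, 1), bias=False)
        self.bn1 = nn.BatchNorm2d(output_channel, momentum=BATCH_NORMALIZATION_MOMENTUM)
        self.conv2 = nn.Conv2d(output_channel, output_channel, kernel_size=(3, 3),
                               stride=(1, 1), padding=(1, 1), bias=False)
        self.bn2 = nn.BatchNorm2d(output_channel, momentum=BATCH_NORMALIZATION_MOMENTUM)
        self.relu = nn.ReLU()  # assumed activation
        self.shortcut = shortcut
        self.stride = stride

    def forward(self, input):
        output = self.relu(self.bn1(self.conv1(input)))
        output = self.bn2(self.conv2(output))
        # Identity skip when no shortcut is given, projected skip otherwise.
        skip = input if self.shortcut is None else self.shortcut(input)
        output += skip
        output = self.relu(output)
        return output


def make_shortcut(input_channel, output_channel, stride):
    # Hypothetical helper: projects the input so its shape matches the block output.
    return nn.Sequential(
        nn.Conv2d(input_channel, output_channel, kernel_size=(1, 1),
                  stride=(stride, stride), bias=False),
        nn.BatchNorm2d(output_channel, momentum=BATCH_NORMALIZATION_MOMENTUM),
    )


# group2, built the same way as in the post and chained with nn.Sequential
block2_1_shortcut = make_shortcut(64, 128, stride=2)
block2_1 = BaseBlock(input_channel=64, output_channel=128, stride=2, shortcut=block2_1_shortcut)
block2_2 = BaseBlock(input_channel=128, output_channel=128, stride=1, shortcut=None)
group2 = nn.Sequential(block2_1, block2_2)

x = torch.randn(1, 64, 56, 56)
y = group2(x)
print(y.shape)  # torch.Size([1, 128, 28, 28])
```

The first block of a group halves the spatial size and doubles the channels, so its skip path needs the projection. The second block keeps the shape and can pass `shortcut=None` for a plain identity skip.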
